Most CPG teams track share of shelf carefully. Compliance scores are reported. Field audits happen on schedule. Dashboards show healthy shelf presence across key accounts. And yet, market share data from the same period tells a different story.

The gap between what the shelf data shows and what purchase data confirms is one of the quieter execution problems in retail. It doesn't announce itself. It compounds slowly, across stores, across categories, until a quarterly review surfaces a number that nobody can immediately explain.

This blog looks at why that gap exists, what it actually reflects, and how computer vision retail metrics change the way category teams read it.

Table of contents:

- Share of Shelf vs Share of Market: When the Gap in Computer Vision Retail Metrics Actually Matters

- Key Takeaways

- Why the Most Tracked Shelf KPI is Also the Most Misread

- The Shelf Intelligence Gap That Sits Behind Every Execution Failure

- What Computer Vision Store Analytics Actually Reveals About Shelf Performance

- Turning the Gap Into a Decision With Computer Vision Retail Metrics

- The Measurement Gap is the Strategy Gap

Why the Most Tracked Shelf KPI is Also the Most Misread

Share of shelf is the percentage of physical shelf space a brand occupies within a category, relative to all competing brands on the same fixture. It is one of the most tracked KPIs in retail execution - and one of the most misread.
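
As a minimal sketch of that definition, the calculation below uses facing counts as a proxy for physical shelf space (an assumption; some teams measure linear centimetres instead). The function name, brand names, and counts are hypothetical and only illustrate the arithmetic.

```python
def share_of_shelf(facings_by_brand: dict[str, int], brand: str) -> float:
    """Return the brand's share of facings on one fixture, as a percentage."""
    total = sum(facings_by_brand.values())
    if total == 0:
        raise ValueError("fixture has no facings recorded")
    return 100.0 * facings_by_brand.get(brand, 0) / total


# Hypothetical facing counts for one category fixture on one visit.
fixture = {"our_brand": 12, "competitor_a": 18, "competitor_b": 10}
our_share = share_of_shelf(fixture, "our_brand")
print(f"{our_share:.1f}%")  # 12 of 40 facings
```

The denominator is every brand on the fixture, so the result is only as good as the competitor counts that feed it.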

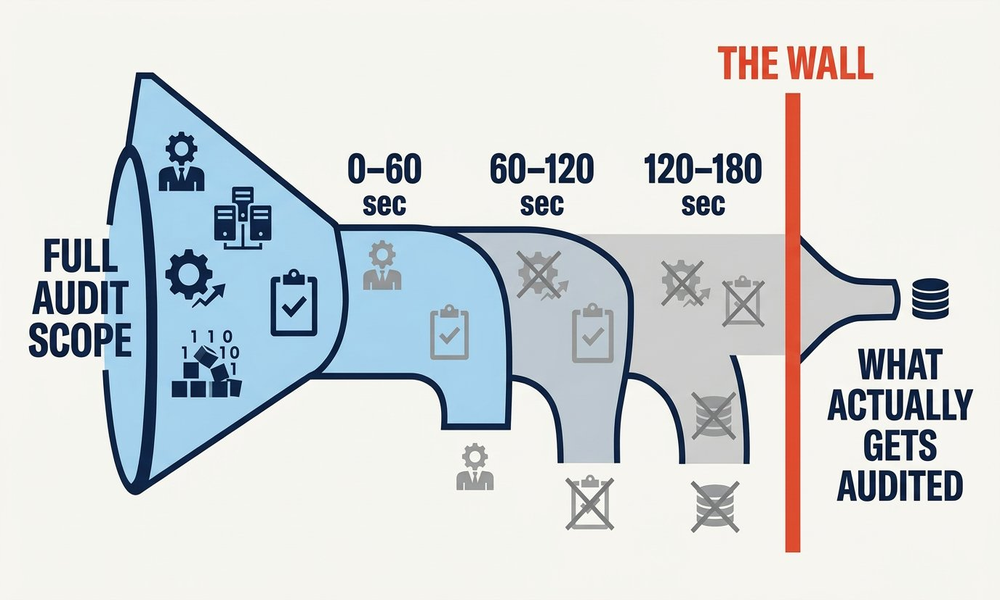

The problem starts with how it gets measured. When field reps count facings manually across hundreds of outlets, they work under time pressure, without a consistent methodology, and without any visibility into what competitors are doing on the same shelf at the same moment. Two reps visiting the same store in the same week can return different numbers. Those numbers feed the same dashboard and inform the same decisions.

The result is a share of shelf figure that reflects effort, not reality. Common ways the number gets distorted:

- Phantom facings: Shelf space is allocated on the planogram but the product is absent or nearly depleted. The facing is counted, but the sale is not made.

- Competitor creep: A competing brand quietly expands into adjacent shelf space between visits. Manual audits miss it entirely because reps record only their own brand's section of the shelf.

- Reset drift: After a promotional reset, products shift position. Counts from the previous visit are carried forward without anyone verifying the new arrangement.
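
The phantom facing distortion can be illustrated with a small sketch that compares share by allocated facings against share by facings that actually hold product. The `Facing` record, the `stocked` flag, and the sample fixture are assumptions made for illustration, not a description of any particular audit tool.

```python
from dataclasses import dataclass


@dataclass
class Facing:
    brand: str
    stocked: bool  # False when the slot is empty or nearly depleted


def allocated_and_stocked_share(facings: list[Facing], brand: str) -> tuple[float, float]:
    """Return (allocated share %, stocked share %) for one fixture."""
    if not facings:
        raise ValueError("fixture has no facings recorded")
    allocated = sum(1 for f in facings if f.brand == brand)
    stocked = sum(1 for f in facings if f.brand == brand and f.stocked)
    total_stocked = sum(1 for f in facings if f.stocked)
    allocated_share = 100.0 * allocated / len(facings)
    # Guard against a fully empty fixture.
    stocked_share = 100.0 * stocked / total_stocked if total_stocked else 0.0
    return allocated_share, stocked_share


# Hypothetical fixture: 4 facings allocated to our brand, 2 of them empty.
fixture = (
    [Facing("our_brand", True)] * 2
    + [Facing("our_brand", False)] * 2
    + [Facing("competitor", True)] * 6
)
allocated_share, stocked_share = allocated_and_stocked_share(fixture, "our_brand")
print(f"allocated {allocated_share:.0f}%, stocked {stocked_share:.0f}%")
```

In this made-up fixture the dashboard would report 40% share of shelf while shoppers see 25%, which is the kind of gap a facing count alone hides.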

The Shelf Intelligence Gap That Sits Behind Every Execution Failure

Most share of shelf discrepancies trace back to a gap in shelf intelligence - the ability to see what is actually happening on the fixture, in real time, across every store in the network.

Without it, category managers find out about execution failures weeks after they occurred, through a field audit that captures a single snapshot of a single store on a single day. By then the promotional window is closed, the lost sales have accumulated, and corrective action addresses a problem that has already moved on.

McKinsey's research has found that roughly 70% of large-scale change programs fail to reach their stated goals, often not because the strategy is wrong but because day-to-day execution cannot keep up with real-world complexity. Shelf execution programs tend to follow the same pattern.

What makes this particularly damaging for the share of shelf vs market share relationship is that execution failures do not distribute randomly. They cluster around high-competition fixtures, high-velocity categories, and stores where field rep coverage is thinnest - precisely the locations where shelf intelligence matters most and where the market share consequences of getting it wrong are largest.

What Computer Vision Store Analytics Actually Reveals About Shelf Performance

The difference between manual shelf measurement and computer vision store analytics is not speed. It is the quality and completeness of what gets measured in the first place.

When shelf images are processed through computer vision at scale, three things become possible that manual methods structurally cannot replicate:

1. Share is calculated against actual competitor presence

Manual audits focus on a brand's own products. Computer vision store analytics processes the entire shelf image - which means share of shelf is calculated relative to what every competing brand actually occupies at that moment, not against an estimated category total. If a competitor expands two facings across six stores over three weeks, that trend appears in the data before it registers in market share numbers.

2. Conditions are timestamped and trend-able

Every image processed through computer vision store analytics carries a time and location stamp. Share of shelf becomes a trend line rather than a point-in-time estimate. When that trend line diverges from market share movement across the same stores and the same period, the divergence is visible and investigable - not speculative.
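
One way to make that divergence concrete, sketched under simple assumptions: fit a least-squares slope to each weekly series for the same set of stores and compare the two. The function names, the `min_gap` threshold, and the weekly figures are hypothetical.

```python
def slope(values: list[float]) -> float:
    """Least-squares slope of a series against its index (change per period)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two observations")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    den = sum((i - x_mean) ** 2 for i in range(n))
    return num / den


def trends_diverge(shelf_share: list[float], market_share: list[float],
                   min_gap: float = 0.1) -> bool:
    """Flag when the two trend slopes differ by more than min_gap points per week."""
    return abs(slope(shelf_share) - slope(market_share)) > min_gap


# Hypothetical weekly percentages for the same stores over the same four weeks.
shelf_share = [30.0, 30.5, 31.0, 31.5]
market_share = [22.0, 21.8, 21.6, 21.4]
shelf_slope = slope(shelf_share)
market_slope = slope(market_share)
print(trends_diverge(shelf_share, market_share))
```

A slope comparison is deliberately crude. It only says the two lines are pulling apart, and the threshold would need tuning against the normal week-to-week noise in each data source.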

3. Root cause becomes separable from symptom

A market share decline has many possible causes. Computer vision retail metrics let category teams isolate the shelf execution variable with precision. If share of shelf is stable and market share is falling, execution is not the issue. If both are declining in the same stores at the same rate, it is - and the fix is operational rather than strategic.

Turning the Gap Into a Decision With Computer Vision Retail Metrics

Not every gap between share of shelf and market share demands immediate action. Some variance is structural. A brand with strong trade investment but inconsistent replenishment will consistently hold more shelf space than its purchase conversion justifies in the short term. The question is whether a gap represents a recoverable execution failure or a deeper commercial imbalance - and that distinction requires data, not instinct.

Consistent retail shelf monitoring at the frequency that computer vision enables gives category teams the framework to make that distinction with evidence. The shelf data tells you what is physically happening. The market share data tells you what shoppers are doing in response. When both are captured with the same frequency and the same granularity, the relationship between them becomes readable.

The scenarios where the gap most reliably signals an actionable execution problem:

- Share of shelf rising, market share flat or falling: Space is being won but not converted. The investigation should focus on on-shelf availability, facing position, and product adjacency within the fixture - not on distribution or pricing.

- Share of shelf falling, market share stable: The brand is converting efficiently but the position is fragile. A competitor gaining ground is not yet hurting purchase. That trajectory will not hold indefinitely.

- Both declining together in the same region: A systemic execution failure. Field rep coverage and retail shelf monitoring cadence in those specific outlets should be the immediate priority.
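
The three scenarios above can be expressed as a simple rule set. This is a sketch under stated assumptions rather than a definitive method: the inputs are period-over-period changes in percentage points for the same stores, and the `tolerance` that separates "flat" from a real move, like the label names, is hypothetical.

```python
def direction(change: float, tolerance: float) -> str:
    """Bucket a change in percentage points as rising, falling, or flat."""
    if change > tolerance:
        return "rising"
    if change < -tolerance:
        return "falling"
    return "flat"


def classify_gap(shelf_change: float, market_change: float,
                 tolerance: float = 0.5) -> str:
    """Map share-of-shelf and market-share movement to one of the scenarios."""
    shelf = direction(shelf_change, tolerance)
    market = direction(market_change, tolerance)
    if shelf == "rising" and market in ("flat", "falling"):
        return "space_not_converted"  # check availability, position, adjacency
    if shelf == "falling" and market == "flat":
        return "fragile_position"     # competitor gaining ground on the shelf
    if shelf == "falling" and market == "falling":
        return "execution_failure"    # review coverage and monitoring cadence
    return "no_flag"                  # e.g. stable shelf, falling market share


print(classify_gap(2.0, -0.1))
print(classify_gap(-1.5, 0.2))
print(classify_gap(-1.2, -0.9))
```

Aggregating by region before calling `classify_gap` is left out of the sketch. A stable shelf with falling market share returns `no_flag` here because, per the reasoning earlier in the post, the cause then lies outside shelf execution.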

The Measurement Gap is the Strategy Gap

The gap between share of shelf and share of market has always existed. What determines whether it costs a brand one quarter or several is how quickly it gets seen, understood, and addressed.

Computer vision retail metrics make the relationship between shelf presence and market performance measurable in something close to real time. Market share data tells you what happened. Shelf data tells you why. When both move together, execution is working. When they diverge, something in the store is breaking down - and the shelf intelligence will show you exactly where.

For CPG category managers, the practical shift is this: stop treating share of shelf as a standalone KPI and start reading it alongside on-shelf availability, retail shelf monitoring cadence, and market share movement in the same stores over the same windows. That combination, tracked consistently through computer vision retail insights, is what turns shelf data from a reporting exercise into a competitive advantage.
